Welcome to the SDC Practitioner Certification Program

You're about to learn the skills to diagnose data infrastructure problems, prescribe deterministic solutions, and build a compounding consulting practice on the SDC ecosystem.

Who this is for

Independent consultants, data architects, knowledge graph practitioners, and internal IT leaders who want to deploy deterministic data infrastructure at client sites.

What you'll be able to do

Run a Maturity Map assessment, build semantic data models in SDCStudio, deploy SDCforSMB applications, prescribe governance interventions, and price engagements using the compounding cost curve.

Time commitment

9 modules, 14-16 hours total, self-paced. Includes one hands-on lab (Module 8) and a capstone proposal (Module 9).

What you need

An SDCStudio account with a $10 wallet activation. A laptop for the Module 8 lab. Curiosity about why data breaks.

Module 1: Semantic Data Fundamentals

Learning Objectives

- Explain why data quality is the floor constraint on every other digital initiative.

- Distinguish syntactic interoperability from semantic interoperability.

- Describe why purely top-down and purely bottom-up modeling approaches both fail.

- Summarize the two-level modeling pattern (reference model + domain constraints).

- Apply the minimum knowledge modeling principle to component design.

- Recognize why structural identity must be decoupled from descriptive labels.

- Identify the role of the SDC specification in the broader semantic data landscape.

- Explain how SDC models produce context graphs with structural governance and why this matters for client conversations.

1.1 The Floor Constraint Core

Every SMB conversation about "AI" or "data strategy" eventually hits the same wall: the data layer is ambiguous, brittle, or untrusted. You can buy the best model, the slickest BI tool, or the most expensive practitioners - none of it matters if the underlying data cannot be reliably interpreted.

The Floor Constraint Principle

An organization's effective digital maturity is capped by the weakest of its foundational data dimensions. You cannot build a 5-story building on a 1-story foundation.

This is why the Maturity Map starts with the foundational dimensions (Schema Integrity, Constraint Enforcement, Semantic Identity) and treats them as gating. A client scoring Level 4 in Governance but Level 1 in Schema Integrity is functionally at Level 1 - the governance is governing chaos.

Reflection

Think of a current client. What happens when two of their systems disagree about a customer record? Who reconciles it? How long does it take? What does it cost?

Real-world example: Knight Capital, 2012

Knight Capital deployed a software update that reactivated dead code referencing an old schema. In 45 minutes, the system executed erroneous trades that cost $440 million. The root cause was a single schema deployment error - the code referenced field definitions that no longer meant what they meant when the code was written. Schema Integrity at its most expensive.

1.2 Syntactic vs Semantic Interoperability Core

Syntactic interoperability means two systems can exchange bytes. JSON in, JSON out. CSV in, CSV out. The pipes connect.

Semantic interoperability means two systems agree on what those bytes mean. When System A says bp: 120, does System B know that means systolic blood pressure in mmHg, taken seated, on the patient's left arm, by a calibrated cuff, at 9:14am?

Most "integration" projects deliver syntactic interoperability and call it done. Then six months later someone discovers the imported data is unusable because the meaning was lost in translation.

Example

A medical practice integrates its EHR with a billing system. Both systems exchange patient_dob. The EHR stores it as YYYY-MM-DD in the patient's local timezone. The billing system stores it as a UTC timestamp at midnight. After daylight saving, 3% of patients are billed under the wrong birth date for insurance verification. The pipes worked. The meaning didn't.

Mini-exercise (2 min)

Look at Atlas Legal's intake brief. Dana Okafor says "a client's name is spelled three different ways across Clio, QuickBooks, and the Access database." Is this a syntactic interoperability problem or a semantic interoperability problem? Why?

Answer: It is a semantic identity problem - the bytes transfer fine (syntactic works), but the receiving system cannot determine that "Jane Smith", "J. Smith", and "Smith, Jane" are the same entity. The meaning is lost because no shared identifier exists.

1.3 Why Top-Down Fails Core

The classical enterprise architecture approach: define a canonical model first, then force every system to map to it.

Why it fails in the wild

- The canonical model is always wrong on day one because nobody knows everything about the domain

- Mapping every legacy system is a multi-year project that loses political support before completion

- Real-world data has exceptions the canonical model didn't anticipate

- The model becomes a museum piece maintained by a small team disconnected from the systems that produce data

Top-down is not stupid. It is correct in spirit. It just cannot be executed against a moving target with finite budget.

1.4 Why Bottom-Up Fails Core

The opposite approach: let every system define its own data, then use ML/heuristics to reconcile after the fact.

Why it fails

- The reconciliation problem is exponential in the number of systems

- Probabilistic matches are not auditable for compliance

- New systems require re-training the reconciliation layer

- "Good enough" matches accumulate semantic drift over time

Bottom-up is also not stupid. It respects reality. It just cannot guarantee correctness when correctness matters (regulatory, financial, clinical).

Real-world example: HealthCare.gov, 2013

HealthCare.gov's launch failed in part because 36 state health insurance exchanges each defined their own data schemas, and the federal hub attempted to reconcile them with probabilistic matching at integration time. The result: enrollment records that couldn't be verified, eligibility determinations that contradicted each other across systems, and a $2.1 billion remediation effort. Bottom-up reconciliation at scale, under regulatory pressure.

Mini-exercise (2 min)

Atlas Legal tried to integrate Clio and QuickBooks once. The accountant gave up because "the client records didn't match." Was this a top-down failure or a bottom-up failure?

Answer: Bottom-up. Both systems defined their own client records independently. The integration attempt assumed reconciliation would work. It didn't, because there was no shared identifier or schema to match against. Atlas Legal never had a canonical model at all.

1.5 Two-Level Modeling Core

The synthesis: separate the reference model (stable, slow-changing, generic primitives) from the domain constraints (fast-changing, specific to a use case).

- Reference model: a small set of well-defined primitives -

Quantity,Coded Value,Identifier,Time Point,Person. These rarely change. - Domain constraints: rules that specialize the primitives for a context - "systolic BP is a Quantity with unit mmHg, range 40-300, taken in posture X by role Y."

Domain modelers (clinicians, accountants, lawyers) author constraints in their own vocabulary. The reference model guarantees those constraints compose with constraints from other domains because they all bottom out in the same primitives.

This is the architecture behind openEHR, ISO 13606, and the SDC specification. It is the only modeling approach that has survived contact with real enterprises at scale over decades.

1.6 Minimum Knowledge Models Intermediate

Two-level modeling tells you how to layer a model. It does not tell you how big each layer should be. The second core principle of the SDC approach answers that question.

Most modeling standards - FHIR, NIEM, HL7, SNOMED CT compositional grammar, and many others - try to model concepts maximally. Every possible attribute that might apply to a concept is added to the definition. The intellectual appeal is "we are being thorough." The practical cost is borne by every implementer for the rest of the standard's life: hard to author, hard to govern, hard to query, hard to validate, hard to map.

SDC takes the opposite approach.

A model component captures only what is essential to identify the concept and distinguish it from its nearby concepts.

Not every concept in the universe - just the ones close enough to be confused with it. Specificity beyond that minimum is achieved through composition with other minimum components at use time, not by inflating any single definition.

A concrete example: blood pressure

A maximal model of blood pressure tries to enumerate every cuff type, every patient position, every measurement device, every reference range, every confounding factor - because somewhere, someone needs each of those. The resulting cluster is enormous. Most attributes are never populated for any given measurement. The cluster is hard to author, hard to govern, hard to query, hard to validate, and hard to map to anything else.

A minimum model of blood pressure captures only what makes it blood pressure and distinguishes it from pulse pressure, arterial pressure, or intracranial pressure. Cuff type, patient position, and measurement device are separate components, composed in only when a specific deployment actually needs them. A clinic that never records patient position never deals with the patient-position component. A research study that does compose it explicitly. Both clinic and research study use the same blood pressure component.

Why maximal modeling breaks even with good people

The openEHR Clinical Knowledge Manager (CKM) is a community-driven archetype library built by people who care deeply about correctness. It still depends on a committee-approval workflow for every change. The bottleneck is not bureaucracy - it is the modeling philosophy. Maximal-modeling pressure makes every archetype contentious because every contributor wants to add their specialization, every reviewer wants to defend the boundaries, and the resulting artifact needs human ratification at every step.

A maximal-modeling philosophy generates governance burden that no community can outrun.

SNOMED CT compositional grammar (the IHTSDO post-coordination approach) is the same lesson at industry-standards scale. Beautiful in theory, brutal at the data management layer. Practitioners who have lived through either of these will recognize the pattern immediately and find SDC's discipline liberating.

Real-world example: openEHR CKM

The openEHR Clinical Knowledge Manager has been developing archetypes since 2007. A single archetype change can take 18 months from proposal through community review to publication. The bottleneck is not bureaucracy or incompetence - it is the modeling philosophy. When every archetype tries to capture every possible use case, every stakeholder has legitimate reasons to contest every change. SDC's minimum knowledge approach eliminates this bottleneck by keeping components small enough that there is nothing to argue about.

Mini-exercise (3 min)

Atlas Legal's paralegal Linda Reeves built an Access database with a client_name field that contains first name, last name, business name, and sometimes a spouse's name - all in one column. Is this a minimum knowledge modeling violation? What would the minimum knowledge approach look like?

Answer: Yes. A minimum knowledge approach would have separate components for PersonName, BusinessName, and SpouseName - each capturing only what distinguishes it. Composed together when needed. Linda's field is a maximal model of "name" that conflates multiple concepts into one column, making it impossible to query, validate, or reuse any individual concept.

Where the locus of specificity sits

The deepest implication of minimum modeling: the locus of specificity is composition, not definition. A maximal model puts specificity in the definition - every possible context baked in. A minimum model puts specificity in the composition - you compose blood-pressure + measurement-device + patient-position when and only when you need that specificity.

This is what makes SDC components reusable across engagements in a vertical practice. The minimum components are stable and shared across every client. The compositions are the local variation that captures each client's specific needs. The practitioner's library compounds because the components themselves do not over-fit to any single client.

Why structural identity is decoupled from descriptive labels

A natural failure mode of maximal modeling is to use the descriptive label as the structural identifier. A concept becomes blood_pressure_systolic_seated_left_arm_calibrated_cuff_2023_revision - the label and the structural identity are the same string, baked into every reference, every query, every export. This is convenient until you need to change anything: rename the concept, translate to another language, improve the wording, refine the meaning. Every change breaks every consumer.

The minimum knowledge modeling discipline forced the SDC ecosystem to a different answer. A component's CUID2 is permanently bound to its label. Every component is identified by a CUID2 - a stable, opaque, machine-generated identifier. The label is not a description that can be edited - it is a fixed part of the component's identity. If you need the same concept expressed in a different language or with different wording, you create a new component with its own CUID2. The two components are then reconciled through their shared semantic links to standard vocabularies - for example, both bind to the same SNOMED code or LOINC term. The CUID2 carries the structural identity. The vocabulary bindings carry the cross-component equivalence.

Why CUID2?

UUID v1 leaks timestamp and MAC address. ULID is sortable but reveals creation order. CUID2 is collision-resistant, URL-safe, reasonably short, and does not leak metadata. It is the kind of identifier you can put in a URL, a graph triple, or a JSON-LD @id and trust will mean the same thing in 20 years.

This is what makes component reuse practical. Because a component's meaning is locked at creation - its label, its constraints, its vocabulary bindings - any system that consumes it gets the same semantics every time. With minimum knowledge modeling, most components are simple enough that they never need to change. You model the concept once, correctly, and reuse it everywhere. If your domain needs evolve, you create new components rather than mutating existing ones. The library grows; existing components stay stable.

No Migration. Ever.

This immutability has a profound long-term consequence: your data never needs migration. In conventional systems, when a schema changes, existing data must be migrated to match the new structure - or it becomes orphaned. In SDC, old components and old data models remain valid forever. Data created against a component today will validate against that same component in ten years. If your domain evolves, new components are created alongside the old ones. The old data stays valid against the old model. No migration scripts. No forced upgrades. No "sunset" deadlines.

For practitioners coming from relational databases, EHR systems, or enterprise platforms, this is a fundamental shift. You are accustomed to schema changes breaking downstream systems and triggering expensive migration projects. In SDC, that entire category of problem does not exist.

The principle applied recursively

- At the component level: each component models only what is essential to its concept

- At the assembly level: a data model includes only the components essential to the use case, not every component that might apply

- At the deployment level: the generated application exposes only the workflows the client actually performs

Maximalism at any layer destroys the benefit at every other layer. Minimalism compounds.

1.7 Where SDC Fits Core

The SDC specification (currently at the SDC4 reference model generation) is a concrete, open implementation of two-level modeling that:

- Uses XSD 1.1 + Schematron for structural and constraint enforcement

- Uses RDF/OWL for semantic identity and reasoning

- Uses SHACL for graph-level validation

- Uses JSON-LD for serialization that round-trips through both worlds

- Binds CUID2 sovereign identifiers to every component for traceability

- Stores data and constraints together so they cannot drift

Practitioners do not need to teach clients the spec. They need to recognize when a client problem is a two-level modeling problem and prescribe SDC ecosystem tools to solve it.

1.8 What You're Building: A Context Graph with Structural Governance Advanced

The industry is converging on the term "context graph" to describe what the enterprise world needs from AI infrastructure. Foundation Capital called it "AI's trillion dollar opportunity." Neo4j is hosting meetups about it. The buzzword is new. The architecture is not.

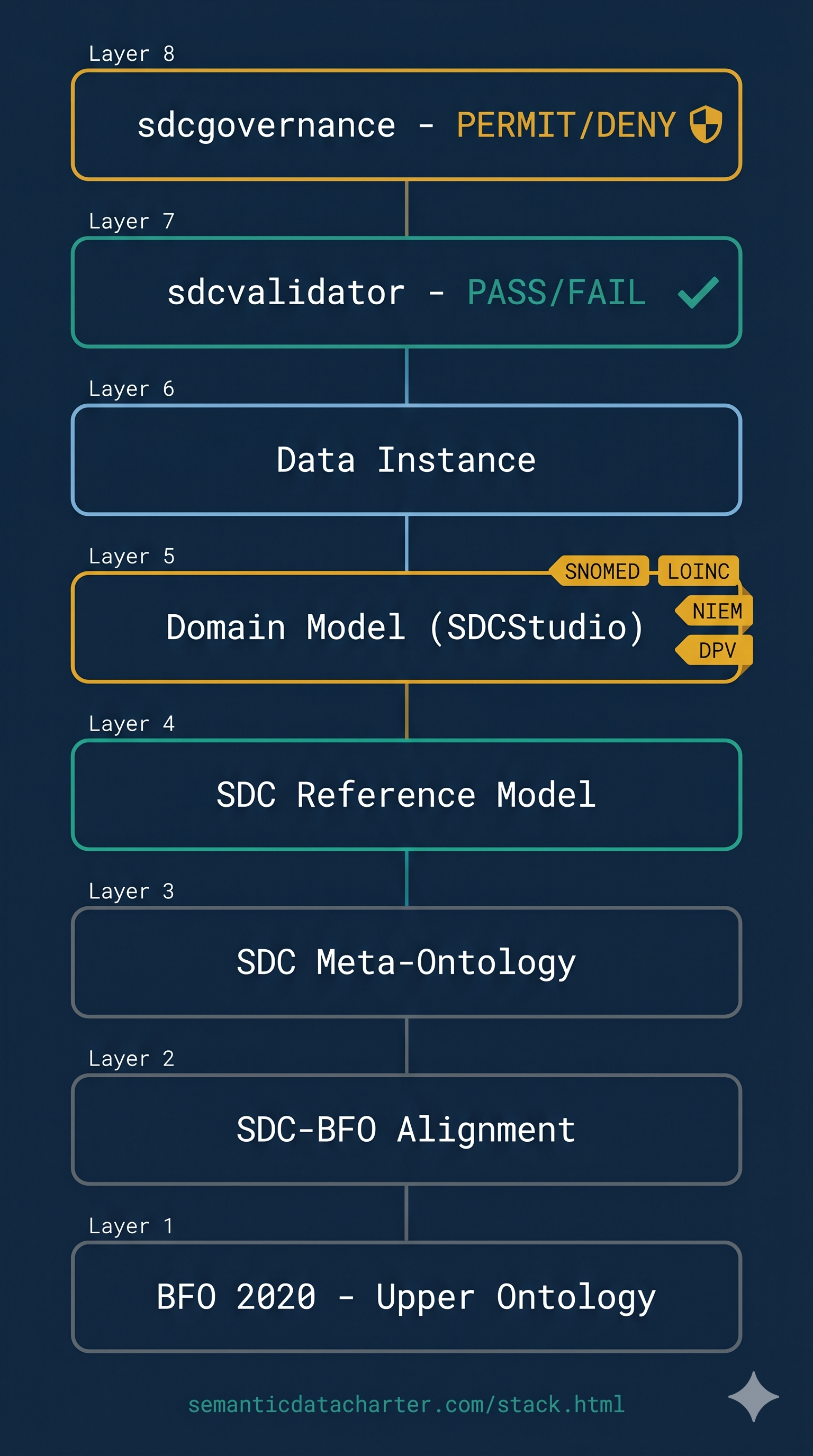

Interactive version: semanticdatacharter.com/stack.html

A context graph is a knowledge graph that contains all of the information necessary to make decisions throughout an organization. Not just the data - the reasoning behind the data, the authority that produced it, the process that governed it, and the provenance that traces it.

When you build an SDC data model, you are building a context graph. Every model automatically generates RDF/OWL/SHACL representations. Every component carries semantic bindings. Every governance element - workflow state machines, attestation models, party/role components, provenance chains - is structurally present in the graph. This is not a separate build step. It is an intrinsic property of the modeling process.

SDC Context Graphs vs. Typical Approaches

- Most context graph approaches build the graph by LLM extraction (probabilistic, lossy, requires continuous human review) or by manual triple authoring (expensive, slow, requires graph specialists). Decision traces are logged after the fact by instrumenting an orchestration layer.

- SDC context graphs are generated deterministically from the data model definition. Decision traces are not logged - they are structurally present in the payload. Governance is not bolted on - it is a property of the data itself. The practitioner's modeling work IS the graph construction.

As Jessica Talisman put it at the inaugural Context Graph meetup: "Context graph is like saying wet water - that's the benefit of graphs." She is right. The industry is discovering what knowledge graphs were always supposed to deliver. SDC delivers it - with governance built in, not added on.

When you talk to clients, use the vocabulary they are hearing from their vendors and conferences: "context graph," "decision traces," "the missing why." Then show them that SDC delivers it structurally, deterministically, and with governance that travels with the data - not in a separate dashboard, not in a vendor's proprietary engine, and not by LLM extraction that requires continuous babysitting.

1.9 Case Study: The $47 Billion Integration Problem Core

(Reference case study - full text in case_studies/integration_cost.html.)

Summary: A 2022 industry analysis estimated US healthcare spends $47B/year on data integration projects that primarily deliver syntactic interoperability. The semantic layer is rebuilt by every vendor, every project, every time. The Maturity Map exists to make this waste visible to a client in 90 minutes.

1.10 Meet Your Client: Atlas Legal Group Core

Throughout this curriculum, you will work with a single fictional client: Atlas Legal Group, a 12-person immigration and small business law firm in the Pacific Northwest. They have the problems every SMB has - client names spelled three different ways across systems, a retiring bookkeeper who is the only person who knows where the data inconsistencies live, a failed $40K vendor migration, and a recent state bar audit finding.

You will meet Atlas Legal's managing partner (Dana Okafor), their informal tech lead (Marcus Chen), the defensive paralegal who built the Access database (Linda Reeves), and the bookkeeper who retires next year (Patricia Kim). You will assess their data, score their maturity, prescribe interventions, deploy SDCforSMB, and build their implementation proposal.

Every concept in this curriculum will be applied to Atlas Legal. When you finish Module 9, you will have a complete, practitioner-quality engagement from intake through proposal - on a case study that mirrors the clients you will actually encounter.

Read the full Atlas Legal intake brief in exercises/case_atlas_legal.html now. You will reference it throughout the remaining modules.

Module 1 Exercise

Open exercises/module_1_exercise.csv - a 50-row customer dataset exported from a fictional CRM. This data has been deliberately seeded with the kinds of problems you will encounter in real client data.

- Part 1 - Semantic Ambiguity (5 min): Identify at least 5 columns or values where the meaning is ambiguous. For each, explain what a receiving system would not know from the data alone.

- Part 2 - Constraint Enforcement (5 min): Identify at least 5 rows where data would fail basic constraint enforcement. What rules should exist but clearly don't?

- Part 3 - Identity Instability (5 min): Identify at least 3 entities that appear multiple times with different identifiers or representations.

- Part 4 - Floor Constraint Application (5 min): Based on what you see in this dataset, assign a working score (Level 1-5) for Schema Integrity, Constraint Enforcement, and Semantic Identity. What is the floor constraint, and what does it cap?

Compare your answers to the reference answer key. Time: approximately 20 minutes.

Module 1 Quiz

0 of 6 answers reviewed

1. A client reports their dashboard "shows different numbers than the source system." Which maturity dimension is most likely the root cause? (See Section 1.1)

Provenance, with Schema Integrity as a contributing factor. The symptom is a lineage failure: the client cannot trace the dashboard number back through its transformations to the source row.

Acceptable alternative: Semantic Identity, if the dashboard and source are joining on different identifiers for the same logical entity.

The wrong answer is "Governance." Governance is what the client wishes they had. It is not the root cause; it is the absence of the layer that would have prevented the cause.

2. True or false: A client at Level 5 Governance with Level 2 Schema Integrity is operating at functional Level 5. (See Section 1.1)

False. The floor constraint principle caps derived dimensions at the minimum of the foundational dimensions. A Level 2 Schema Integrity score caps effective Governance at Level 2.

The Level 5 Governance score is "what the policies aspire to enforce." The Level 2 Schema Integrity is "what the foundation actually allows them to enforce." Report both, and explain the gap.

3. Why is "let ML reconcile it" insufficient for regulatory reporting? (See Section 1.4)

Three reasons:

- Probabilistic matches are not auditable. "The model thought they were the same with 94% confidence" is not a regulatory defense.

- Reconciliation drifts over time. Each retraining changes the answer to historical questions, breaking the principle that historical records validate against the rules in force at the time they were written.

- The reconciliation layer becomes load-bearing infrastructure with no schema. Critical, opaque, and unversioned. A regulatory finding cannot be remediated by editing a policy document.

4. Name three primitives in a reference model. (See Section 1.5)

Any three of: Quantity, Coded Value, Identifier, Time Point, Time Interval, Person, Organization, Location, Document, Event, Observation, Measurement.

A wrong answer is anything domain-specific like "patient" or "invoice" - those are domain entities, not reference model primitives.

5. What is the difference between an XSD schema and a Schematron rule? (See Section 1.7)

XSD defines structural constraints: which elements exist, in what order, with what types and cardinality. "Is this document well-formed against the expected shape?"

Schematron defines content constraints as assertions: "if element A exists, element B must also exist," or "the value of X must be greater than Y."

In SDC they work together: XSD enforces the shape, Schematron enforces the rules. SDC4 uses XSD 1.1 which incorporates some Schematron-like assertions natively.

6. A client's data team has spent two years building a single "Patient" component with 187 optional attributes. What is the most likely consequence, and what would minimum knowledge modeling recommend instead? (See Section 1.6)

Consequence: A maximal model with authoring burden, query complexity, validation difficulty, mapping pain, versioning fragility, and over-fitting to the first deployment.

Minimum knowledge modeling recommends: A Patient component with only 5-10 distinguishing attributes. Separate components for each cluster (PatientAllergies, PatientInsurance, ResearchEnrollment). Composition at use time: a clinic composes Patient + PatientAllergies; a research study composes Patient + ResearchEnrollment. The specificity sits in the composition, not the definition.

Further Reading

- ISO 13606 reference model overview

- openEHR architectural overview

- W3C Data on the Web Best Practices

- The SDC specification (introduction chapter only for this module)